
🚦 The Illusion of Control: Why Your Green Dashboards Ship Absolutely Nothing

Nothing calms executive anxiety quite like a fully booked calendar. Open the portfolio dashboard of a typical organization and the utilization metrics glow deep green. Engineers are double-booked across three parallel projects. Product Owners juggle five strategic priority-one initiatives simultaneously. Middle management spends the entire day in endless alignment calls just to manage the red traffic lights on lower levels. Everyone is working at absolute capacity. According to classical business logic, this organization should be an unstoppable force of value creation.

Except it is not. Look at the actual output and the delivery pipeline turns out to be a highly paid parking lot. Story items rot in Jira for quarters. Time to market is as dead as a Nokia strategy paper.

When the pipeline chokes, the boardroom reflex is highly predictable. They panic, demand “efficiency,” and shove yet another strategic priority down the funnel to make up for lost time. That is the exact moment the company unplugs its own ventilator. We are still managing 2020s complexity with 1920s Taylorism. An idle belt bleeds money, so the belt must never stop. Optimizing cognitive capacity for 100 percent utilization is not leadership. It is organizational sabotage.

🥶 Corporate Thrashing: When the Operating System Collapses

To understand what happens to an organization under full load, we need to borrow a concept from computer science: thrashing. When a computer’s physical memory is full, the operating system starts swapping data out to the hard drive. If you increase the load further, the system hits a physical breaking point. The machine suddenly spends nearly all of its time merely shuffling data back and forth between memory and disk, and almost none of it actually executing programs. The machine freezes. The system burns. At maximum capacity. Throughput collapses toward zero. Author Seth Godin mapped this exact phenomenon to the workplace, coining the term corporate thrashing. It is what happens when a management team launches too many strategic initiatives in parallel.

In the modern office, “shuffling data” is a highly paid spectator sport. It consists of cross-functional alignment calls, endless status syncs, and the holy grail of corporate paralysis: the steering committee. When every department is running at 100 percent capacity across five parallel top priorities, every tiny request mutates into a blocking dependency. Because everyone’s calendar is booked solid until Christmas, nobody has ten minutes to review a simple pull request. The result is tragically comical. You pay senior engineers €80,000 a year to sit in meetings for thirty hours a week, presenting beautifully color-coded slide decks that explain exactly why they cannot write any code. The organization’s cognitive CPU is entirely consumed by the meta-work of managing its own gridlock.

Congratulations. You have successfully built an incredibly expensive machine whose sole operational output is keeping its own “Check Engine” light on.

When the machine inevitably freezes, the standard boardroom reflex is a marvel of counterproductive logic. They look at the stalled delivery pipeline and conclude that the teams are simply not working hard enough. The proposed cure? More load. They demand daily status reports to track the inefficiency, establish a new “agile task force” to monitor the gridlock, and shove yet another priority-one initiative down the funnel to make up for lost time. In computer science, when an operating system is thrashing, forcing it to process more data guarantees a fatal crash. In the corporate world, doing the exact same thing usually gets you promoted to Vice President of Operations.

📐 The Physics of Gridlock: Why Little’s Law Always Wins

Look at the fundamental mathematical flaw in management’s logic. Executives love to treat knowledge work like a physical manufacturing plant. In a 1920s Taylorist factory building identical steel widgets, task duration is perfectly predictable, which means you can safely push the conveyor belt close to maximum capacity without breaking the system. But modern product development is not an assembly line. Every feature is essentially a prototype of something that has usually never been built before. The complexity is inherently variable.

Before reaching the exponential cliff of wait times, management must confront the fundamental equation they stubbornly ignore: Little’s Law. In John Little’s original 1961 operations research notation, it reads L = λ × W, and it dictates a ruthless reality. In the original math, L stands for the average number of items in the system (loosely, the length of the queue), λ (lambda) represents the average arrival rate, and W stands for the average time an item spends waiting in the system.

The modern agile world flipped the letters to fit its own acronyms. In the agile adaptation, W now stands for Work in Progress (WIP), λ becomes Throughput, and L becomes Lead Time, creating the formula W = λ × L. Reading λ as Throughput works because, in a stable system, items leave at the same average rate at which they arrive. If you want to calculate how long an item will actually take to deliver (which is exactly what management constantly asks for), you simply rearrange this formula to L = W / λ, or Lead Time = WIP / Throughput. The physics remain absolute: for any stable system measured over a long enough period, it is a mathematical certainty, not a mere suggestion from a junior scrum master.

Little’s Law: Adding more work to a full system only increases waiting time.

Translate that into the reality of a European strategy offsite. If management aggressively shoves new “Priority 1” initiatives into the funnel while the teams are already fully loaded, Throughput (λ) cannot rise, so the Work in Progress (W) explodes. Because the equation must balance, Lead Time (L = W / λ) physically has to stretch. Adding more concurrent projects to a blocked pipeline does not magically increase throughput. It mathematically mandates that the average project will take proportionally longer to finish. You are not accelerating delivery; you are just maximizing the time your capital is tied up in unfinished inventory.

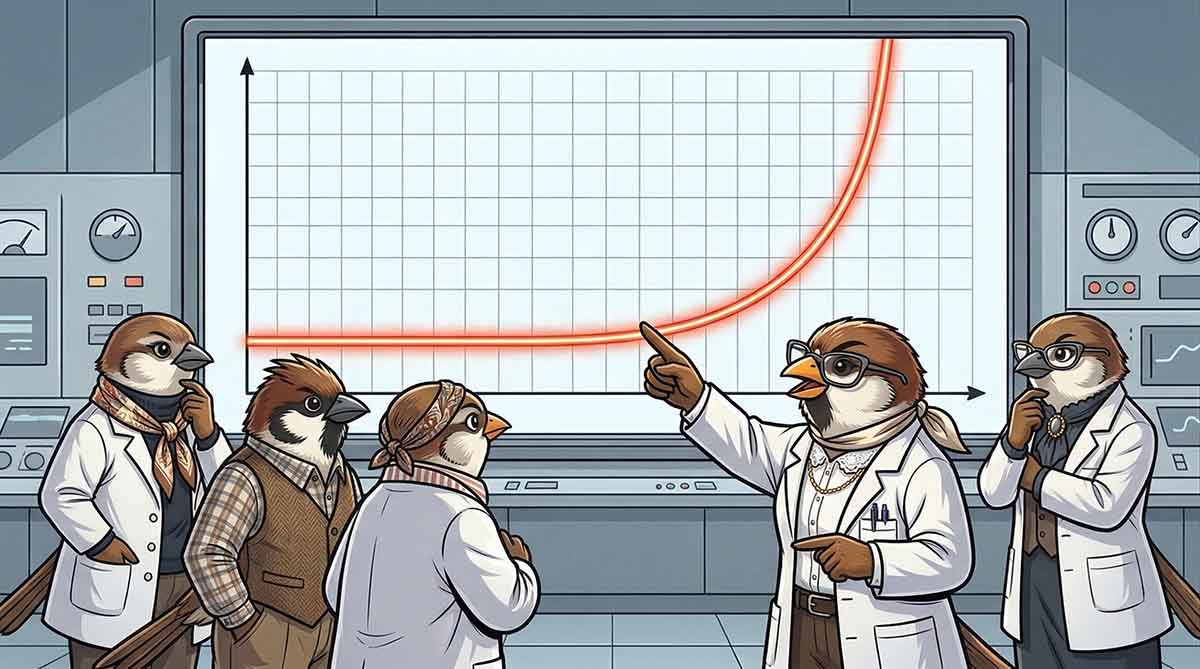
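For the spreadsheet-minded, here is a minimal Python sketch of the agile form of Little’s Law. The department, its throughput of five items per week, and the WIP levels are hypothetical numbers chosen for illustration; the only real ingredient is Lead Time = WIP / Throughput, which holds for long-run averages in a stable system.

```python
def lead_time(wip: float, throughput: float) -> float:
    """Little's Law in the agile notation: Lead Time = WIP / Throughput.

    Valid for long-run averages in a stable system (arrivals ~= completions).
    Units follow the inputs: throughput in items per week gives lead time in weeks.
    """
    if throughput <= 0:
        raise ValueError("throughput must be positive")
    return wip / throughput


if __name__ == "__main__":
    # Hypothetical department that finishes 5 items per week, no matter how much is started.
    throughput = 5.0
    for wip in (20, 40, 80):  # management keeps adding "Priority 1" work
        print(f"WIP {wip:>3}: average lead time {lead_time(wip, throughput):.0f} weeks")
```

With throughput stuck at five items per week, doubling WIP from 20 to 40 doubles the average lead time from four to eight weeks, and 80 items push it to sixteen. Nobody worked less; the queue simply got longer.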

📉 The Exponential Cliff: Kingman’s Formula and the Human Processor

Enter Sir John Kingman and the unforgiving mathematics of queueing theory. Kingman’s formula shows that in any system with variable processing times, the relationship between utilization and wait time is not a gentle slope; it is a cliff. Expected wait time scales with ρ / (1 − ρ), where ρ is utilization. When a team is utilized at 50 percent, a new request waits only briefly. At 80 percent, the expected wait is already four times as long as at 50 percent, and the queue starts to build. But as management cracks the whip and pushes utilization toward that coveted 100 percent mark, the wait time does not just increase. It goes completely vertical. It approaches infinity.

Kingman’s Curve: Optimizing for 100% busyness guarantees 0% delivery.
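To see the cliff in numbers, here is a hedged Python sketch of Kingman’s approximation for a single-server queue: expected wait ≈ (ρ / (1 − ρ)) × ((ca² + cs²) / 2) × mean service time. The team, the one-day average task, and the variability values (ca² = cs² = 1, the value for random, exponential-like arrivals and task durations) are assumptions for illustration, not measurements.

```python
def kingman_wait(utilization: float, ca2: float, cs2: float, mean_service: float) -> float:
    """Kingman's approximation for the mean queueing delay in a single-server (G/G/1) queue.

    utilization  -- rho, the fraction of time the server is busy (0 <= rho < 1)
    ca2, cs2     -- squared coefficients of variation of inter-arrival and service times
    mean_service -- average time to process one item once work actually starts
    """
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1); the queue has no steady state at 1.0")
    return (utilization / (1.0 - utilization)) * ((ca2 + cs2) / 2.0) * mean_service


if __name__ == "__main__":
    # Hypothetical team: one task takes 1 day of focused work, with random
    # arrivals and task durations (ca2 = cs2 = 1).
    for rho in (0.5, 0.8, 0.9, 0.95, 0.99):
        wait = kingman_wait(rho, ca2=1.0, cs2=1.0, mean_service=1.0)
        print(f"utilization {rho:.0%}: ~{wait:.0f} days waiting before work starts")
```

Under these assumptions the expected wait before anyone even touches the work is about 1 day at 50 percent utilization, 4 days at 80, 9 at 90, 19 at 95, and 99 at 99 percent. The function refuses 100 percent outright, because the formula has no finite answer there.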

In their desperate attempt to ensure that no highly paid engineer ever experiences a single moment of idle time, management has mathematically mandated that every strategic initiative will spend 90 percent of its lifecycle rotting in a “Waiting for Review” column.

You haven’t built a high-performance delivery engine. You have engineered a highly efficient museum for aging code.

An observant person might spot a paradox here. “Wait, didn’t you just criticize us for treating humans like machines? And now you are using CPU architecture and server queueing theory to make your point?” Yes. I am. Because that is exactly the point. I am using machine mathematics because human beings are infinitely worse at being machines than actual machines. A silicon processor has zero emotions, requires no psychological safety, and never burns out. Well, unless you were playing Amazon’s New World and its uncapped frame rate literally bricked your graphics card.

A processor’s context-switching penalty is measured in microseconds. According to interruption research, a human engineer’s context-switching penalty averages 23 minutes and 15 seconds of staring at a screen trying to rebuild their mental model, plus the mounting existential dread of an impending deadline. If pushing a literal, unfeeling piece of metal to 100 percent capacity mathematically guarantees a fatal system crash, what do you think that exact same management philosophy does to a biological human brain? The physics don’t just apply to your employees. The physics hit them ten times harder. And squish them.

When a Linux server finally runs out of memory after severe thrashing, the kernel’s OOM (Out of Memory) Killer brutally terminates processes to save the machine. When a biological human runs out of cognitive RAM, their internal OOM Killer kicks in just as ruthlessly to protect the host. It forcefully terminates their motivation, resulting in cynical detachment, “quiet quitting”, or a sudden, doctor-mandated sabbatical.

🐢 The Reality Check: The Three-Month “Quick Fix”

If you want to watch Kingman’s exponential curve destroy a timeline in the wild, just observe the majestic lifecycle of a trivial Jira ticket. The task is offensively simple: a minor update to a payment API endpoint. Tom pulls the item on a Monday morning. By 11:00 AM, the code is written and the local tests are green. A flawless execution of modern software engineering. The ticket predictably moves to the “Waiting for Code Review” column, blissfully unaware of the bureaucratic hellscape it is about to enter.

In a healthy system with cognitive slack, a teammate picks up the pull request after lunch, reviews the code, and it ships the same day. But this organization optimizes for 100 percent utilization. Sarah, the required reviewer, is double-booked in cross-functional alignment meetings and frantically coding her own “Priority 1” feature. Because her calendar is a solid block of Tetris (before the long one drops), the trivial update sits untouched in the review column for eight days.

When Sarah finally finds a microscopic gap between meetings to review the code, she leaves a single, valid comment about a naming convention. The pull request bounces back. Tom takes exactly five minutes to fix the variable. But because Sarah is now deeply buried in another context, the revised ticket goes straight to the back of her review queue. Another five days pass.

Once finally approved, the code enters “Waiting for QA.” But the centralized testing department is also running at maximum utilization, currently drowning in the manual regression testing of a legacy system. The trivial API update rots in their backlog for three weeks. When it finally clears QA and is ready for production, an entirely unrelated team of overloaded engineers accidentally brings down the shared deployment pipeline. The actual release is delayed by another two weeks.

Ninety days after Tom typed the final line of code, the minor update goes live in production. The actual work time was two hours and ten minutes. The Lead Time was three months.

If you are reading this and thinking, “This is a cartoonish exaggeration, this does not happen in reality,” congratulations. You either work in a startup with under fifty people, or you have simply never looked at your actual metrics. Ask whoever tracks your data to pull the true Lead Time of your lowest-priority tickets. In a scaled corporate matrix, this is not an edge case. It is standard operating procedure. None of these delays were caused by malice, laziness, or incompetence. Every single bottleneck was a perfectly rational, localized decision made by a biologically overwhelmed human trying to survive a 100 percent utilized calendar.
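If nobody on your team tracks this yet, the calculation itself is trivial. Below is a minimal Python sketch that assumes a hypothetical export with a ticket id, a priority, the date work started, and the date the change went live; the tickets, the field layout, and the priority labels are invented for illustration and do not reflect any real Jira schema or API.

```python
from datetime import date
from statistics import median

# Hypothetical tracker export: (ticket id, priority, work started, live in production).
# Illustrative values only, not a real Jira schema.
TICKETS = [
    ("PAY-101", "Low", date(2024, 3, 4), date(2024, 6, 3)),
    ("PAY-102", "Low", date(2024, 3, 11), date(2024, 4, 22)),
    ("PAY-103", "High", date(2024, 3, 12), date(2024, 3, 19)),
]


def lead_times_days(rows, priority=None):
    """Calendar days from 'work started' to 'live in production' for each ticket,
    optionally restricted to a single priority level."""
    return [
        (done - started).days
        for _, prio, started, done in rows
        if priority is None or prio == priority
    ]


if __name__ == "__main__":
    low = lead_times_days(TICKETS, priority="Low")
    print(f"Low-priority tickets: median lead time {median(low)} days, worst {max(low)} days")
```

For the two hypothetical low-priority tickets this reports lead times of 91 and 42 days (median 66.5). Measure to “live in production”, not to “code complete”, or Tom’s three months vanish from your statistics.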

🧠 The Grey Area: The Cognitive Cost of “Staying Busy”

What exactly happens while Tom’s trivial API update sits in the review queue for eight days? Tom doesn’t stare at a blank screen. The corporate spreadsheet demands constant activity. So, to remain 100 percent utilized, Tom pulls another ticket from the backlog. When that second ticket inevitably gets blocked by a dependency, he pulls a third. To the management dashboard, Tom is a high-performing asset handling three critical tasks simultaneously. To cognitive psychologists, Tom is a textbook example of systemic biological failure.

This dynamic also turns your Daily Standup into a daily hostage video, where five exhausted engineers just stare into their webcams and mumble, “No blockers, still in progress”, while the board burns down behind them.

Biological Thrashing: The brain is not a multi-core processor. Every forced context switch burns massive amounts of productive time purely on setup costs and leaves behind blocking attention residue.

Tom is not a multi-core processor. When he opens that second Jira ticket, his brain doesn’t just neatly pause the first one. It has to unload one complex mental model and load another. Research by Joshua Rubinstein, David Meyer, and Jeffrey Evans, published by the American Psychological Association, put a number on this friction: constant context switching can burn up to 40 percent of a worker’s productive time. And the real damage is even worse. You cannot cleanly pause an unresolved problem. It leaves behind what researchers call “attention residue.” A piece of Tom’s mental RAM stays allocated to the blocked API update. He is now trying to write code for Ticket B while his brain is still subconsciously stuck on Ticket A.

Then, five days later, Sarah finally leaves her single comment on the original pull request. A Slack notification pops up. Tom drops Ticket C to fix the variable in Ticket A. UC Irvine researcher Gloria Mark quantified exactly what happens next: after an interruption like this, it takes an average of 23 minutes and 15 seconds just to get back to the interrupted task. Multiply this constant state of cognitive whiplash across a fifty-person engineering department running at maximum capacity. You are no longer paying for software development. You are paying top-of-market salaries for biological thrashing.
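Here is that multiplication as a back-of-the-envelope Python sketch. It assumes, as a deliberate simplification, that every interruption costs the full 23 minutes and 15 seconds average recovery time; the four interruptions per engineer per day are a hypothetical input, not a measured figure.

```python
RECOVERY_MINUTES = 23.25  # average time to get back to an interrupted task: 23 min 15 s


def lost_hours_per_day(interruptions_per_engineer: int, engineers: int,
                       recovery_minutes: float = RECOVERY_MINUTES) -> float:
    """Rough estimate of engineer-hours per working day spent getting back on track.

    Simplifying assumption: every interruption costs the full average recovery time.
    """
    return interruptions_per_engineer * recovery_minutes * engineers / 60.0


if __name__ == "__main__":
    # Hypothetical: 4 interruptions per engineer per day in a 50-person department.
    hours = lost_hours_per_day(4, 50)
    print(f"~{hours:.1f} engineer-hours per day spent refocusing")
```

Four interruptions a day across fifty engineers comes to 77.5 engineer-hours of refocusing every single day, close to ten people’s full eight-hour shifts.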

🛡️ The Defense: Selling Slack to the Spreadsheet

If the physics of slack are undeniable, why does every organization naturally regress to 100 percent utilization? Because a fully loaded calendar looks efficient to a spreadsheet. When a manager walks past a row of desks (or scans a row of green Teams status dots), they don’t see cycle time or flow efficiency. They see busy people typing fast. They see activity. And in the corporate world, visible activity is the primary metric of control. It is much easier to manage the sweat on a developer’s forehead than the actual speed of a deployed feature.

This is why asking your bosses for “twenty percent slack time” feels like career suicide. When an Agile Coach asks for breathing room, the business hears a request for paid vacation. They hear developers wanting to play with shiny new frameworks instead of shipping customer value. To the spreadsheet, the word “slack” translates directly to “waste.”

If you want to change this dynamic, you have to stop using the word “slack.” A pragmatic director does not want to fund an agile philosophy; they want to protect the company’s margins. So you have to translate the physics into their language. When leadership demands more output, you do not ask for breathing room. You pull up the actual data for Tom’s trivial API update and present a simple business case: “Do we want this feature to tie up our capital and block the pipeline for three months, or ship in three days?” You must shift the entire conversation away from individual utilization. You stop defending the developer’s calendar, and you start defending the company’s ability to deliver.

🛑 The Antidote: The Political Danger of Idle Time

Now, inject just 20 percent slack into that exact same organization. Sarah still has meetings, but her calendar has breathing room. When Tom finishes the code, he doesn’t throw a pull request over the wall into a black hole. He simply asks Sarah for a quick review. Because she isn’t drowning in competing “Priority 1” fires, she actually has 15 minutes. They jump on a quick call or sit together. Sarah spots the naming convention issue, and Tom fixes it live. The review takes exactly ten minutes of high-bandwidth, synchronous collaboration.

The ticket moves to QA. Because the testing department also operates with a 20 percent buffer, there is no three-week backlog. A tester picks up the API update that same afternoon. And that fragile shared deployment pipeline? It doesn’t crash. The other engineering team wasn’t frantically rushing a release, meaning they had the slack to actually build proper automated guardrails weeks ago.

The two-hour task is in production the very next morning. Same code. Same people. The only difference is the deliberate mathematical space that allows a system to actually function.

The cure for this mathematical disaster is scientifically trivial, yet politically terrifying. You have to introduce slack. The temporal dimension. Not the SaaS product currently pinging you with a new dependency.

As engineer Donald Reinertsen showed by applying queueing theory to product development, you must intentionally lower organizational utilization to a maximum of 80 percent, without prescribing what to do with the idle time. To a traditional executive who learned their trade maximizing factory output, intentionally leaving 20 percent of a highly paid engineering workforce “idle” sounds like a fireable offense. It looks like laziness on a spreadsheet.

But that 20 percent is not wasted time where developers are secretly watching YouTube. It is the cognitive shock absorber for reality. Without it, every single production bug, sick day, or vaguely written Jira ticket instantly becomes a multi-department traffic jam. You can either pay for slack, or you can pay for permanent gridlock. The laws of physics dictate that you cannot choose neither.

You cannot rely on executive self-control to maintain this 20 percent buffer. The moment a director sees an engineer with a spare afternoon, they will instinctively try to fill it with a new strategic initiative. The only way to protect your cognitive shock absorber is a hard, structural constraint: strictly limiting Work In Progress (WIP).

Pioneered in software engineering by David J. Anderson and the Kanban Method, a WIP limit is an absolute cap on the number of active items that a team, a department, or even an entire organization is allowed to handle simultaneously. When the limit is reached, the system mathematically locks. No new “Priority 1” tickets enter the pipeline until an existing item is completely finished, deployed, and off the desk. It forces the organization to finally stop starting, and start finishing.

When a WIP limit mathematically locks the board, a developer who finishes their task is structurally barred from pulling a new Jira ticket. To classical management, this triggers a primal fear: an idle worker. But this is exactly where the 20 percent buffer works its magic.

Instead of blindly starting new code that will inevitably pile up in front of the next blocked column, that developer must look across the board and ask: “Who needs help to get an existing item across the finish line?” They swarm the bottleneck. They perform code reviews, write necessary documentation, or pair-program to resolve a stubborn bug. A strict WIP limit fundamentally forces collaboration over individual busyness. It forces an organization to stop treating its engineers like isolated factory machines, and start optimizing the system for what actually matters: throughput.
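The mechanics of a hard WIP limit fit in a few lines. This is a hypothetical Python sketch, not the API of any Kanban tool: a column refuses new pulls once it is full, and the only way to free a slot is to finish something already in progress.

```python
class KanbanColumn:
    """Minimal sketch of a board column with a hard WIP limit (pull system)."""

    def __init__(self, name: str, wip_limit: int):
        if wip_limit < 1:
            raise ValueError("wip_limit must be at least 1")
        self.name = name
        self.wip_limit = wip_limit
        self.items: list[str] = []

    def can_pull(self) -> bool:
        return len(self.items) < self.wip_limit

    def pull(self, item: str) -> bool:
        """Start an item only if the column has capacity; otherwise refuse."""
        if not self.can_pull():
            return False
        self.items.append(item)
        return True

    def finish(self, item: str) -> None:
        """Completing an item is the only way to free a slot."""
        self.items.remove(item)


if __name__ == "__main__":
    in_progress = KanbanColumn("In Progress", wip_limit=3)
    for ticket in ("A", "B", "C", "D"):
        if in_progress.pull(ticket):
            print(f"started {ticket}")
        else:
            print(f"limit reached: help finish {in_progress.items} before starting {ticket}")
    in_progress.finish("A")
    print("D started:", in_progress.pull("D"))
```

The fourth pull is refused, and the refusal is the point: whoever wanted to start D is steered toward finishing A, B, or C instead. Only after A is done does the column accept D.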

📊 Flow Efficiency: Stop Tracking Sweat, Start Tracking Speed

As long as your executive dashboards are built around resource allocation, nothing will change. In the European enterprise, works councils rightfully enforce strict boundaries on how individual tracking data can be used. Robbed of the stopwatch, management retreats to measuring abstract busyness instead: full project allocations, booked person-days, and perfectly distributed FTEs. To protect your delivery speed, you have to completely flip the metric. You must stop measuring whether your engineers are 100 percent assigned to a project, and start measuring how much time a project spends waiting for an engineer.

The metric you are looking for is called Flow Efficiency. The calculation is brutally simple: divide the active work time by the total Lead Time. Look back at Tom’s API update: two hours and ten minutes of actual work drowned in ninety days of wait time. If you run the math in calendar time, that specific feature had a Flow Efficiency of about 0.1 percent. For over 99 percent of its lifecycle, your invested capital was not being worked on. It was just sitting in a Jira column, rotting in a queue, waiting for a fully utilized human to finally have fifteen minutes of spare time.
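Here is the same arithmetic as a minimal Python sketch, using the numbers from Tom’s story: 2 hours 10 minutes of hands-on work and a 90-day lead time. Measuring lead time in calendar time is an assumption; counting only working hours (roughly 64 working days of 8 hours) would lift the result to about 0.4 percent, still nowhere near one.

```python
from datetime import timedelta


def flow_efficiency(active: timedelta, lead_time: timedelta) -> float:
    """Flow Efficiency = active work time / total Lead Time, as a fraction between 0 and 1."""
    if lead_time <= timedelta(0):
        raise ValueError("lead_time must be positive")
    return active / lead_time  # timedelta / timedelta yields a plain float


if __name__ == "__main__":
    # Tom's API update: 2 h 10 min of hands-on work, 90 calendar days end to end.
    fe = flow_efficiency(timedelta(hours=2, minutes=10), timedelta(days=90))
    print(f"Flow Efficiency: {fe:.2%}")
```

It prints 0.10%. Because dividing one `timedelta` by another returns a float, the function works with whatever time resolution your tracker exports.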

The Flow Efficiency Paradox: Management invests heavily in micromanaging the few hours of active work, while completely ignoring the months of expensive wait time in congested system queues.

When executives see a stalled pipeline, their instinct is to make the individual developer type faster. They buy expensive AI coding assistants hoping to shave ten minutes off a two-hour task. But mathematically, optimizing the one percent of active work is a complete waste of capital if you continue to ignore the 99 percent of wait time. Flow Efficiency forces the boardroom to look at the actual financial leak: the queues. Once you start tracking how long your investments sit idle between overloaded departments, introducing strict WIP limits stops being a radical agile experiment. It becomes a basic fiduciary duty.

A deep-green resource matrix projected onto the boardroom wall is not a triumph of management. It is a mathematically guaranteed traffic jam. When the PMO proudly presents a spreadsheet where every single developer is booked to exactly 100 percent capacity, they haven’t optimized your time-to-market. They have just successfully optimized the reporting. The steering committee gets to feel entirely in control, comfortably oblivious to the fact that they are currently funding the most meticulously documented standstill in the industry.

The moment you replace resource utilization with Flow Efficiency on the executive dashboard, the entire corporate conversation fundamentally shifts. Directors stop asking “who has free capacity?” and start asking “why is this priority ticket waiting?” You finally stop trying to micromanage the individual developer, and you start managing the system. That is the only way you actually buy back your time-to-market.

Here is a benchmark to ruin your next management offsite: the industry average for software development Flow Efficiency is roughly 15 percent. According to Lean practitioners and flow researchers, unoptimized enterprise teams frequently operate between 2 and 5 percent. If your department manages to hit 40 percent, you are not just good; you are operating at an elite, world-class level.

100 percent Flow Efficiency is practically impossible in team-based knowledge work, because complex engineering inherently requires some wait states—waiting for automated build pipelines to finish, deploying to test environments, or simply coordinating two schedules for a synchronous pairing session.

And here is the ultimate paradox: if your dashboard shows that your engineers are 100 percent utilized, Kingman’s formula says your queues grow without bound, which drives your Flow Efficiency toward zero. You are paying top dollar for people to be completely exhausted, while the actual work stands perfectly still.
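Why does full utilization drag Flow Efficiency toward zero? A hedged single-stage derivation: look at one queue in the pipeline, let τ be the active work time per item, take Kingman’s approximation for the expected wait E[W_q], and write V = (c_a² + c_s²)/2 for the variability term. Assuming V = 1 (random, exponential-like arrivals and task durations), Flow Efficiency at that stage reduces to one minus utilization.

```latex
\begin{aligned}
\mathrm{FE} &= \frac{\tau}{\tau + E[W_q]},
  \qquad E[W_q] \approx \frac{\rho}{1-\rho}\,V\,\tau,
  \qquad V = \frac{c_a^2 + c_s^2}{2} \\
\Rightarrow\quad \mathrm{FE} &\approx \frac{1-\rho}{1-\rho+\rho V}
  \;\overset{V=1}{=}\; 1-\rho
\end{aligned}
```

Under that assumption, a stage running at 80 percent utilization spends about 20 percent of an item’s time on actual work, a stage at 98 percent spends about 2 percent, and as ρ approaches 1 the fraction goes to zero. Higher variability (V > 1) makes every one of those numbers worse.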

🏁 The Bottom Line: You Cannot Outmanage Physics

Management in knowledge work is not a battle against human laziness. It is a battle against system physics. As long as the boardroom rewards 100 percent utilization, they are actively funding their own gridlock. They are paying premium salaries to watch Jira tickets age.

The choice is brutally simple. You can either have fully utilized engineers, or you can have fast release cycles. You physically cannot have both. If you want to accelerate your time-to-market, you have to accept that sometimes, a highly paid developer will be staring out the window.

It is high time to finally bury Frederick Winslow Taylor and throw away his century-old factory playbook. Stop punishing idle time. Start punishing bloated Work in Progress. Stop trying to squeeze every last drop of cognitive capacity out of your teams, and start managing the actual flow of value.

Because physics does not care about your strategic roadmap, your Q3 targets, or your steering committees. And Little’s Law has never lost a fight.

By the way, there is one massive variable we conveniently left out of this equation: the radioactive decay of the work itself. When Tom’s trivial API update sits in a queue for weeks, the rest of the codebase does not patiently wait. It moves on. By the time Sarah finally approves the pull request, Tom cannot simply hit merge. He now has to perform digital necromancy to resolve cascading merge conflicts, turning a five-minute fix into another afternoon of high-risk technical suffering. Add to that the Cost of Delay, the actual unrealized revenue the company bleeds every single day a finished feature sits undeployed. But the compounding interest of code rot and delayed value is enough material for its own entirely separate deep dive.

⏱️ TL;DR: The 30-Second Reality Check

If you are a director running between alignment meetings and only have thirty seconds to understand why your release cycles are collapsing, read this:

- 100% Utilization is System Sabotage: Optimizing your developers for a perfectly booked calendar mathematically guarantees a stalled delivery pipeline. You are maximizing the time your capital sits waiting in queues, not the actual output.

- The Physics are Absolute: Kingman’s formula dictates that a fully loaded system cannot absorb variable knowledge work. Pushing utilization past 80 percent makes Lead Times climb steeply, and they grow without bound as utilization approaches 100 percent. You cannot outmanage physics.

- Context Switching is Financial Bleeding: Forcing engineers to juggle multiple blocked “Priority 1” tickets destroys up to 40 percent of their productive time. You are paying top-of-market salaries for biological thrashing.

- The Fix is Counterintuitive: You must intentionally lower organizational utilization to a maximum of 80 percent. This 20 percent slack is the cognitive shock absorber required for the system to actually flow.

- The Tool is WIP Limits: Stop tracking individual busyness and start measuring Flow Efficiency. Introduce strict Work In Progress (WIP) limits to lock the system, forcing teams to collaborate on bottlenecks.

- The Bottom Line: Stop starting, start finishing.

🧾 The Receipts: The Physics and the Data

Physics does not negotiate with management. If your board demands proof that their utilization fetish is guaranteed to stall the company, put these references on the table.

- Corporate Thrashing: To explain what happens to an organization under maximum load, cite Seth Godin’s 2010 book Linchpin (ISBN: 978-1591844099). He originally mapped the computer science concept of operating system memory thrashing to organizational behavior and project overload.

- The Invention of Corporate Slack: To trace the conceptual origin of why pushing knowledge workers to 100 percent capacity destroys agility, cite Tom DeMarco’s seminal 2001 book Slack: Getting Past Burnout, Busywork, and the Myth of Total Efficiency (ISBN: 978-0767907699). Long before modern scaling frameworks existed, DeMarco established that organizations running at maximum utilization lose all ability to respond to change, effectively engineering their own paralysis.

- The Cost of Context Switching: To prove that juggling multiple priority-one initiatives actively destroys cognitive capacity, cite Gerald M. Weinberg’s classic Quality Software Management: Systems Thinking (ISBN: 978-0932633224). He estimated that assigning a senior engineer to three simultaneous projects burns roughly 40 percent of their available time purely on the meta-work of context switching.

- The Mathematics of Gridlock: To back up the claim that running a department at 100 percent capacity halts delivery, reference Donald G. Reinertsen’s The Principles of Product Development Flow (ISBN: 978-1935401001). It is the definitive engineering text proving that managing knowledge work without capacity buffers mathematically guarantees massive delays, establishing the absolute necessity of keeping utilization around 80 percent.

- Organizational Drag: For empirical data on the €80,000 meeting-spectator-sport, cite Michael Mankins and Eric Garton’s research for Bain & Company, published in Time, Talent, Energy (ISBN: 978-1633691766). They calculated that modern enterprises lose massive amounts of their total productive power to “organizational drag”: the endless sludge of alignment calls, steering committees, and meta-management.

- The Mathematics of Infinite Wait Times: To explain why maximizing utilization guarantees zero delivery, cite Sir John Kingman’s foundational queueing theory paper, “The single server queue in heavy traffic” (1961). It contains the mathematical analysis showing that wait times grow without bound as utilization approaches 100 percent in variable environments.

- The 23-Minute Context Switching Penalty: To back up the exact cognitive cost of interrupting a developer, cite the foundational study by Gloria Mark, Daniela Gudith, and Ulrich Klocke from the University of California, Irvine (2008): “The cost of interrupted work: more speed and stress.” The empirical data proves it takes an average of 23 minutes and 15 seconds to return to the original task after an interruption.

- WIP Limits and the Kanban Method: To prove that management cannot self-regulate capacity and requires hard mathematical constraints, cite David J. Anderson’s foundational text, Kanban: Successful Evolutionary Change for Your Technology Business (ISBN: 978-0984521401). Anderson pioneered the application of pull-systems and strict Work In Progress limits in software engineering, coining the operational mandate to “stop starting, and start finishing.”

- The 40 Percent Task-Switching Penalty: To mathematically destroy the illusion that developers can efficiently juggle multiple “Priority 1” tickets, cite the research by Joshua Rubinstein, David Meyer, and Jeffrey Evans published by the American Psychological Association (2001). They proved that the brain’s executive control processes—goal shifting and rule activation—burn up to 40 percent of a worker’s productive time when constantly switching contexts.

- Attention Residue: To explain why a blocked ticket actively degrades a developer’s brain even when they are working on something else, cite Sophie Leroy’s foundational 2009 paper, “Why is it so hard to do my work?” (Organizational Behavior and Human Decision Processes). She proved that humans cannot cleanly “pause” tasks; a cognitive residue remains stuck on the unresolved problem, severely crippling performance on the next task.

- The Benchmarks of Flow Efficiency: To prove that 100 percent flow efficiency is a mathematical myth, cite researchers Niklas Modig and Pär Åhlström’s foundational 2012 book This Is Lean: Resolving the Efficiency Paradox (ISBN: 978-9198039306), which originally defined the destructive conflict between resource efficiency and flow efficiency. For the specific software industry benchmarks, refer to the empirical data first presented by Lean researchers Zsolt Fabók and Håkan Forss (Lean Agile Scotland, 2012). Their widely accepted data establishes that unoptimized knowledge-work teams typically operate at a flow efficiency of 2 to 5 percent, while 15 percent is a healthy average, and hitting 40 percent places an organization at an elite, world-class level.